Pre-training is plateauing. The next frontier of AI isn't making models bigger — it's making them better after training, through reinforcement learning. We're watching the "AWS moment" for reinforcement learning unfold in real time.

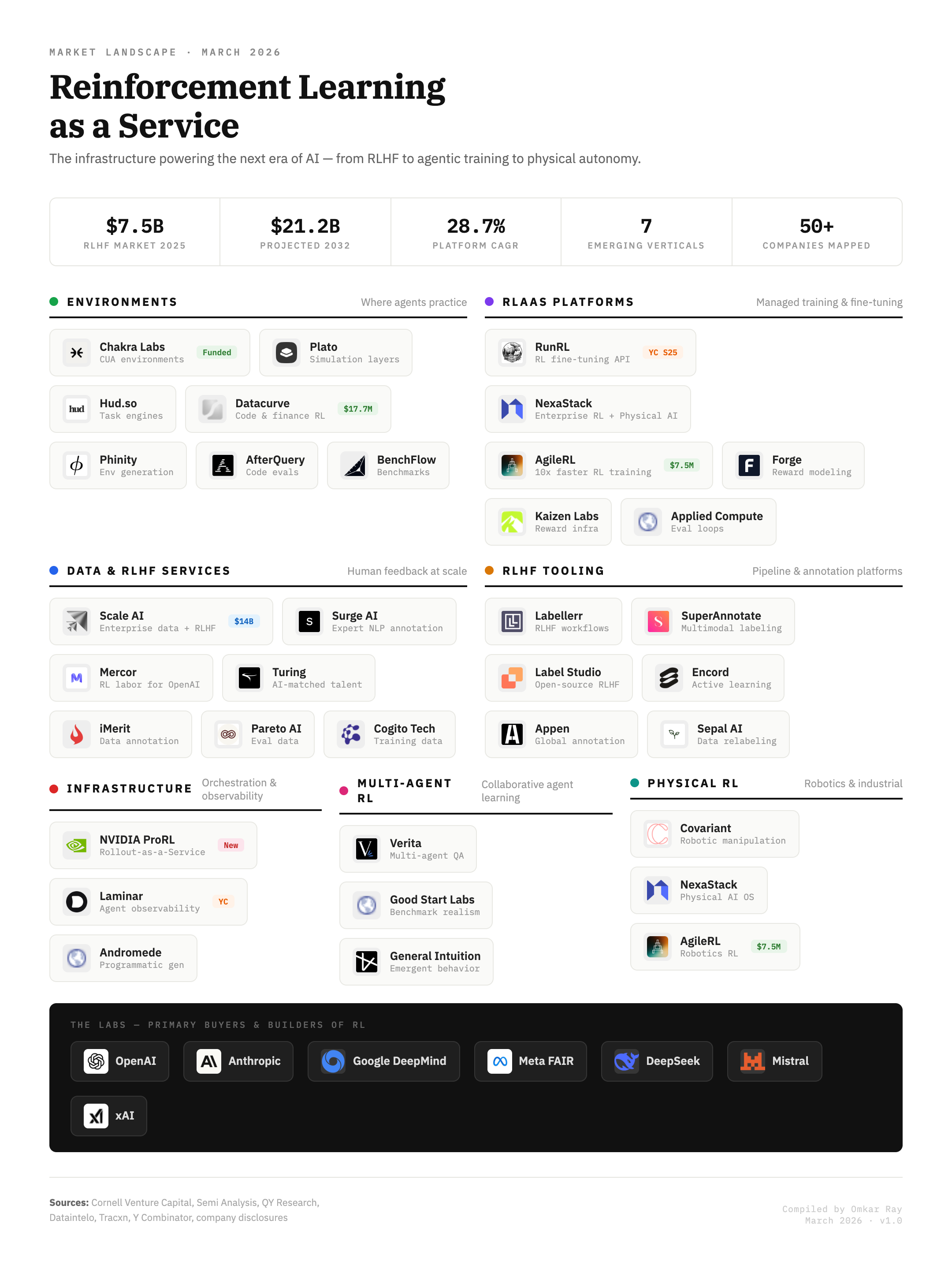

Every frontier lab — OpenAI, Anthropic, DeepMind, Meta, DeepSeek, Mistral, xAI — is now a buyer of RL infrastructure. The market is $7.5B today, growing to $21B+ by 2032. But only ~5 labs are buying today. When RL goes enterprise — and with agentic AI projected to hit $90B in 2026, it will — the TAM expands 100x.

The Structural Shift

Pre-training hit the wall. Post-training is the new game.

For five years (2020–2025), the AI industry operated under one belief: scale is all you need. More data, more compute, more parameters, better models. This was the pre-training era. That era is over.

Ilya Sutskever — the person most responsible for the scaling hypothesis — declared in late 2025 that "the age of scaling has ended." His reasoning:

1. High-quality internet text is finite. We've consumed most of it. Synthetic data helps, but has diminishing returns.

2. Compute scaling has flattening curves. Doubling GPUs no longer doubles capability. The gains are logarithmic, the costs are linear.

3. Benchmarks ≠ intelligence. Models ace tests but fail at genuine generalization. More scale won't fix architectural limitations.

So where does improvement come from now? Reinforcement learning. Specifically, post-training RL — where you take a pre-trained model and teach it to reason, plan, and act through trial-and-error in interactive environments.

This is how ChatGPT was made useful (RLHF). This is how DeepSeek R1 learned to reason. This is how every future agent will learn to operate in the real world.

Era 1 (2020–2024): Pre-training → "Make the model bigger"

Era 2 (2025–????): Post-training → "Make the model better with RL"

This isn't a trend. It's a paradigm change. And it creates an entirely new infrastructure layer that doesn't exist yet.

Why Now

Five forces converging to make RL infrastructure investable right now.

1. The ChatGPT Proof Point (2022)

ChatGPT wasn't just a larger model. It was GPT-3.5 + RLHF. The technique that made it useful — that turned a text predictor into something that could follow instructions, refuse harmful requests, and have coherent conversations — was reinforcement learning from human feedback. This was the first proof that RL post-training was the difference between a toy and a product.

2. DeepSeek R1 Democratizes RL (2024)

When DeepSeek open-sourced R1 and showed you could achieve near-frontier reasoning with GRPO (a cheaper RL algorithm) on open models, the floodgates opened. Suddenly every AI lab and enterprise could do RL post-training — they just needed the tools.

This is the "Linux moment" for RL. The technique is open. The infrastructure to use it at scale is not.

3. Agentic AI Creates Unbounded Demand (2025–2026)

Agentic AI — autonomous systems that take actions, not just generate text — is projected to be a $90B market in 2026 (215% growth YoY). 78% of Fortune 500 companies will deploy agents this year. Every agent needs to be trained through RL. Static supervised learning can't teach an agent to navigate a browser, negotiate a price, or recover from errors.

Agentic AI is the demand engine. RL infrastructure is the supply.

4. NVIDIA Enters (March 2026)

When NVIDIA launches a product in a category, the category is real. NVIDIA ProRL Agent — "Rollout-as-a-Service" — decouples rollout generation from model training. This is NVIDIA saying: RL infrastructure is a platform, and we're building the picks-and-shovels layer.

5. Cost of Human Data Is Exploding

Training datasets now cost 10× to 1,000× more than the compute to train models. GPT-4's dataset cost an estimated 300× its training compute. Scale AI's revenue is projected to double to $2B in 2025. The RL supply chain — data labeling, reward modeling, evaluation, environments — is the new bottleneck. And bottlenecks create platform opportunities.

The Market

Size, growth, and the 7 verticals forming the RL infrastructure stack.

| Vertical | What it does | Key players | Moat type |

|---|---|---|---|

| Environments | Where agents practice | Chakra, Datacurve, Hud.so, Plato | Data + realism |

| RLaaS Platforms | Managed training APIs | RunRL, AgileRL, NexaStack | Workflow lock-in |

| Data & RLHF | Human feedback at scale | Scale AI, Mercor, Surge AI | Network + relationships |

| RLHF Tooling | Annotation pipelines | SuperAnnotate, Label Studio, Encord | OSS community |

| Infrastructure | Orchestration layer | NVIDIA ProRL, Laminar | Ecosystem + compute |

| Multi-Agent RL | Cooperative agent training | Verita, General Intuition | Research IP |

| Physical RL | Robotics + industrial | Covariant, NexaStack | Hardware integration |

Today: ~5–7 frontier labs buying RL infrastructure. Tomorrow: Thousands of enterprises. Gartner projects 40% of enterprise applications will embed task-specific agents by end of 2026 (up from <5% in 2025). Each of those agents needs RL training infrastructure.

The Landscape

50+ companies mapped across seven layers — from where agents train, to who orchestrates the loop.

The Investment Framework

Where value accrues — not all layers are created equal.

Environments

If you control where agents practice, you control what they learn. Environments are the "training data" of the RL era — without them, nothing works. Realistic environment creation is hard. You need domain expertise, real-world fidelity, and constant updating. This isn't commoditizable.

Signal: Chakra Labs building pixel-perfect browser clones. Datacurve raising $17.7M for code RL environments. Halluminate (YC S25) entering the space.

Analogy: Environments are to RL what training data was to supervised learning — the single biggest leverage point.

RLaaS Platforms

"Stripe for RL." Abstract away the complexity of reward function design, training orchestration, and evaluation. Make it an API call. Workflow lock-in creates enormous switching costs.

Signal: RunRL (YC S25) — usage-based pricing, $80/node-hour. AgileRL raising $7.5M with "10× faster training" claim.

Risk: NVIDIA ProRL could commoditize this layer. But platforms that own the developer experience will survive, just as Vercel survived despite AWS.

Data & RLHF Services

Picks and shovels. Every model needs human feedback. The market is growing because AI is growing. Scale AI at $14B valuation, $2B projected revenue. RLHF data costs 10–1,000× compute costs.

Risk: Synthetic self-play could reduce demand for human feedback. But we're at least 3–5 years away from this being viable at scale.

Multi-Agent + Physical RL

Cooperative agents and real-world robotics represent the long-term vision of RL. Massive TAM, but earlier stage. Deep technical IP and hardware integration required.

Signal: NVIDIA entering physical RL. Covariant proving robotic RL works.

The "Stripe for Evals" Gap: The single biggest opportunity in this market is the company that builds the horizontal RL training platform — the one that makes it as easy to run an RL training loop as it is to process a payment. This company doesn't exist yet. Whoever nails the developer experience will own this market.

The Risks

Four scenarios that could slow or break the thesis.

Lab Oligopoly

Probability: 25%OpenAI, Anthropic, and DeepMind build everything in-house. RL infrastructure becomes a feature, not a product. The startup layer gets squeezed.

Why it's unlikely to play out fully: Labs are talent-constrained and time-constrained. They'll build core training internally but outsource environments, data, and tooling — exactly what happened with cloud.

Synthetic Self-Play

Probability: 15% by 2028Agents learn to train other agents. Human feedback becomes unnecessary. The entire RLHF services layer collapses.

Why it's a distant risk: Current research shows synthetic data degrades after a few generations. Human-in-the-loop feedback is still necessary for alignment, safety, and domain-specific accuracy. This is a 5–10 year risk.

Fragmentation

Probability: 30%Dozens of niche RL shops. No platform winner emerges. Thin margins everywhere. The market looks more like "AI consulting" than "AI infrastructure."

Why it's manageable: Platform effects naturally consolidate. The developer experience winner will pull away, just as Stripe did in payments and Twilio did in communications.

Regulatory Intervention

Probability: VariableGovernments regulate RL training (especially for autonomous agents) in ways that slow adoption.

Why it's manageable: Regulation typically creates moats for compliant platforms. The companies that build compliance into their infrastructure will benefit.

The Analogy

We're in 2008 for RL infrastructure.

────────────────────────────── ───────────────────────────

AWS launches S3 + EC2 → NVIDIA launches ProRL

Only tech companies use cloud → Only AI labs use RL infra

"Why would I rent servers?" → "Why would I outsource training?"

$16B market (2008) → $10B market (2026)

$600B market (2023) → $???

Heroku, Vercel, Stripe emerge → RunRL, AgileRL, Chakra emerge

Every company becomes a tech co. → Every company will train agents.

The primitives exist. The developer tools are emerging. The platform winners haven't been crowned yet. But the trajectory is unmistakable.

What I'm Watching

Signals that confirm or invalidate the thesis.

Signals that confirm:

- Enterprise (non-lab) RL training volume crosses $500M ARR (2027)

- YC batch has 10+ pure RL infra companies in a single cohort

- One RLaaS platform hits $50M ARR

- NVIDIA expands ProRL to enterprise tier

- Major cloud provider (AWS/Azure/GCP) launches managed RL training service

Signals that invalidate:

- Synthetic self-play matches human RLHF quality across domains

- Labs build all infra in-house and stop outsourcing

- Agentic AI adoption stalls (enterprise deployment <20% by 2027)

- RL post-training proves less effective than alternative techniques (e.g., pure inference-time compute scaling)

The Bottom Line

RL-as-a-Service is where cloud infrastructure was in 2006–2008. The technique has been proven (ChatGPT, DeepSeek R1). The demand is exploding (agentic AI). The platform layer is forming (7 distinct verticals, 50+ companies). NVIDIA has entered. The labs are all buyers.

The question isn't whether RL infrastructure will be a massive market. It's who will build the platforms that own it.

The companies to watch: RunRL, AgileRL, Chakra Labs, Datacurve, Scale AI.

The gap to fill: The "Stripe for RL" — a horizontal platform that makes RL training an API call.

The timing: Now.